by Maria J C Machado. In late September, I attended a conference on Open Scholarship held by OASPA (Open Access Scholarly Publishing Association). A lot of ideas were born from this event, and I would like to share some of them, focusing on what struck me as the most pressing issues related to peer review. Please get back to me with any comments or catch me and Gareth on our Ask Us Anything events on LinkedIn Live.

I will focus on two main panel discussions:

- Preprints: Supporting Open Peer Review and Global Preprint Adoption Trends

- Transforming Research Integrity: the growing potential of open science practices

Today, I will address the second discussion topic, which was chaired by Andrea Chiarelli (Research Consulting). This panel featured the presentation of a study by Clarissa F. D. Carneiro (BIH Quest). The other talks were by Roohi Ghosh (CACTUS, new chef of the Scholarly Kitchen), Matt Hodgkinson (UKRIO, who quoted the X-Files), and Catriona MacCallum (Hindawi).

In Clarissa’s presentation, we learned that reproducibility is in crisis within biomedical sciences. Pharmaceutical companies found that a shockingly small share (only approximately 11%) of the scientific findings in academic-led preclinical studies could be replicated in an industrial setting. This is concerning because these studies are what inspire the industry to develop drugs, which are then tested in humans via clinical trials. The reasons for this resonated with the in vivo physiologist in me: poor experimental design, incomplete/selective reporting, and suboptimal quality assurance.

These are all issues that could have been spotted by competent and careful peer review. Some of the proposed solutions focused on reducing the burden on time-starved researchers, which I agree could liberate them from administrative tasks to focus on volunteer scholarly input tasks, such as peer review. However, what is their motivation to do so? We will circle back to this…

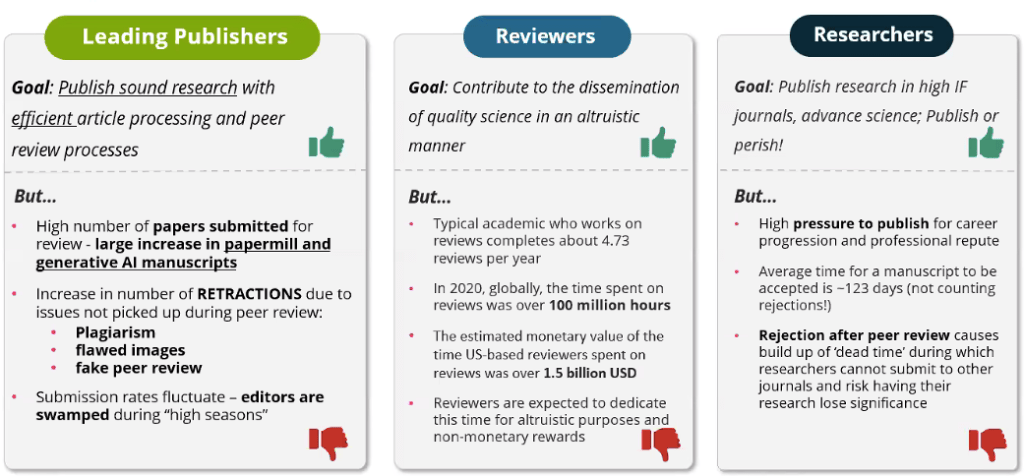

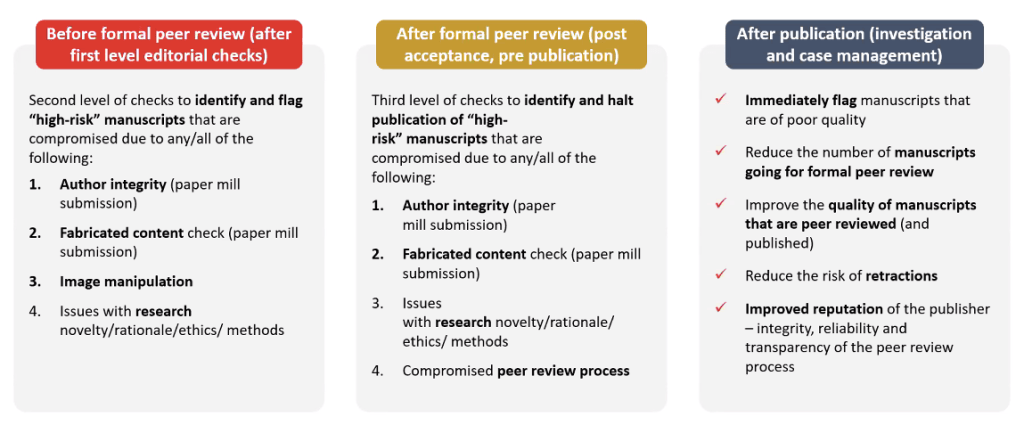

Roohi talked about threats to research integrity from the manuscript reviewer’s perspective. Plagiarism is ever more successfully caught by AI, yet AI is also the most severe concern, with ChatGPT and other generative text tools increasing the volume of fake papers produced by paper mills. However, unethical research practices, cherry picking, and salami slicing (issues that, I believe, AI has yet to recognise) were also highlighted.

What struck me was the amount of time spent by researchers on peer review (see above). How open science can help with addressing integrity issues seems to once again rely on researchers and their unpaid labor. That’s a lot of pro-bono work! Are these researchers recognised by their institution? May they use this work to advance their career?

Roohi touched on the importance of recent developments, such as the early sharing of preprints and collaborative peer review. (I will dig deeper into a whole session on preprint peer review that happened earlier.) Nevertheless, it became obvious that experienced peer reviewers have the best skills to help with integrity checks.

Like most academics, Matt Hodgkinson started with facts: since 2000, when prospective registration of primary outcomes on ClinicalTrials.gov started to be required, the landscape has changed. Large clinical trials assessing drugs for the treatment or prevention of cardiovascular disease became much more likely to report null findings.

This example served as the springboard for a discussion of the possible causes for the distrust of science that became rampant in the general population. Importantly, named peer review carries a fear of retaliation, but Matt reminds us that transparency is only one of the five pillars of research integrity. Publishing models where review reports are published anonymously alongside the manuscript uphold rigour and respect (as well as honesty and accountability) and may become the norm in the future.

Double-anonymised peer review is fairer, more equitable, and less biased. The debate regarding who benefits from open peer review is ongoing. Although I perceive this process as a great teaching opportunity (getting ahead of myself here…), pinning one’s name and ORCID to a review report may not be every early career researcher’s idea of a good professional move.

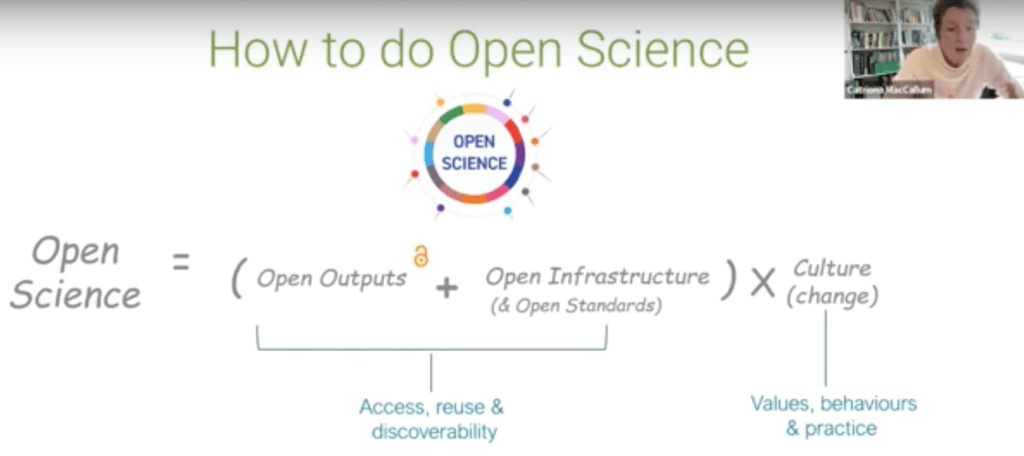

Catriona MacCallum has been in the eye of the storm, as head of Open Science at Hindawi. The scale of publication manipulation has proved bewildering. Nevertheless, she argued that the principles of open science (as disseminated by UNESCO) protect researchers from bias and promote equity. Could this truly be the case? What drives the reproducibility crisis, mistrust in science, and mistrust in publishing? OASPA’s own blog by Malavika Legge concludes that ‘open access is leading to closed research’.

How to do open science

According to Catriona, the publish-or-perish economy in which researchers operate, together with false proxies of quality that are maintained by funders and institutions, led publishers to shift from a journal-based economy (subscriptions or pay to read) to an article-based economy (APCs or pay to publish).

Having an evidence-informed approach seems to be the obvious start. We are striving towards that, as the results of three large-scale projects on meta-research funded by the European Commission become available. TIER2, iRISE, and OSIRIS address how open science is connected to reproducibility and integrity.

With the suggestion that peer reviewer manipulation could be spotted by detecting plagiarism across review reports and across publishers, the panellists place great importance on these reports, elevating them to the level of scrutiny of the publications they are assessing. Maybe future academic CVs will include lists of published review reports alongside publication lists. Training for editors and reviewers then emerges as an essential prerequisite for capacity building.

Open Access, Open Science, and Peer Review: ‘Trust No One’ written by Maria J C Machado.